Recently I have been spending a good amount of time reading about Generative AI. I shared the list of resources I used here.

To me, the best way to learn a new technology is to build something with it. Azure Docs Copilot is my attempt at applying what I have learned about this incredible technology to building something tangible and useful.

What is Azure Docs Copilot?

In simple terms, Azure Docs Copilot is a Generative AI app that lets users search Azure documentation using natural language. A user could ask questions about Azure like:

- Name different kinds of blobs in Azure Storage.

- What is the maximum size of the partition key in Cosmos DB?

- Explain vector search capability in Azure Cognitive Search.

The concepts used in building this application can be used to build AI applications that enable natural language search on any private data.

How does it work?

In the current version, it’s a very simple application that uses the Retrieval Augmented Generation (RAG) technique: it performs a similarity search on a vector database containing the contents of the Azure documentation.

The results of the similarity search and the user’s question are then fed to a Large Language Model (LLM), which answers the question based only on those results.

Through proper prompting, the LLM is instructed to use only the information from the similarity search results (the context) when generating an answer.
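To make the prompting idea concrete, here is a minimal sketch of how such a prompt could be assembled. The function name `build_prompt` and the exact wording of the instructions are my own assumptions for illustration and are not taken from the repository.

```python
def build_prompt(question: str, context_chunks: list[str]) -> str:
    """Combine search results and the user's question into one grounded prompt.

    Hypothetical helper: the instruction wording is an illustrative assumption.
    """
    # Number each chunk so the model can tell the pieces of context apart.
    context = "\n\n".join(
        f"[{i}] {chunk}" for i, chunk in enumerate(context_chunks, start=1)
    )
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say that you don't know.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


prompt = build_prompt(
    "Name different kinds of blobs in Azure Storage.",
    ["Azure Storage supports block blobs, append blobs, and page blobs."],
)
print(prompt)
```

Telling the model what to do when the context has no answer is one common way to discourage it from falling back on what it already knows.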

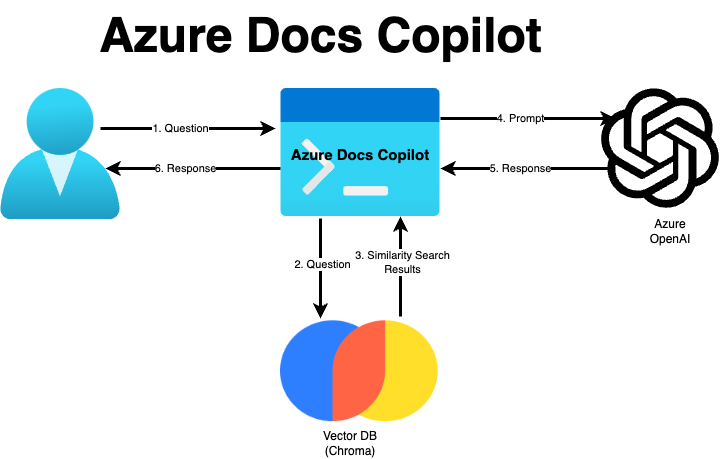

Following diagram shows the overall architecture of the application.

Here’s how it works:

- The user asks the application a question.

- The question is sent to the vector database, where a similarity search finds the documentation content most similar to the question.

- The vector database returns the matching results to the application.

- The application then combines the matching results with the user’s question and sends them to the LLM as a prompt.

- The LLM prepares a response based on the prompt and sends it back to the application.

- The user is shown the response.
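The steps above can be sketched end to end in plain Python. This is a toy under loud assumptions, not the application's actual code: `embed` is a bag-of-words stand-in for a real embedding model, the list `docs` stands in for the Chroma database, and `echo_llm` is a placeholder for the Azure OpenAI call. All names here are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real app would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def similarity_search(question: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the question."""
    q = embed(question)
    return sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def answer(question: str, documents: list[str], llm) -> str:
    """Retrieve context, combine it with the question, and ask the LLM."""
    matches = similarity_search(question, documents)
    prompt = (
        "Use only this context to answer:\n"
        + "\n".join(matches)
        + f"\n\nQuestion: {question}"
    )
    return llm(prompt)


# Stand-in for the vector database contents.
docs = [
    "Azure Storage supports block blobs, append blobs, and page blobs.",
    "Azure Cosmos DB is a globally distributed database service.",
    "Azure Cognitive Search supports vector search over embeddings.",
]


def echo_llm(prompt: str) -> str:
    """Placeholder LLM that just returns the prompt it was given."""
    return prompt


print(answer("What kinds of blobs does Azure Storage have?", docs, echo_llm))
```

In the real application the embedding and storage would be handled by the vector database and the last step would be a call to the deployed model, but the flow of data is the same.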

Notable points

- The application is a command-line application built using Python 3.

- Chroma is used as the vector database. There are many vector databases available today, such as Azure Cognitive Search, Qdrant, and Pinecone. I picked Chroma simply because it lets me save the database locally.

- LangChain was used for building the app. Other options I would want to explore are Microsoft Semantic Kernel (I wrote a blog post about it here) and LlamaIndex for building such applications.

- Azure OpenAI is used for the Generative AI part of this application. I used a GPT-3.5-based deployment in Azure OpenAI.

- The application is multilingual by default (something I discovered accidentally). In my limited testing, I asked a question in Spanish and, to my surprise, got the correct answer back in Spanish.

Source code

The source code for the application is available on GitHub. You can download the code from here: https://github.com/gmantri/azure-docs-copilot. Setup instructions can be found in the README.md file.

The code is pretty rough at the moment and does not follow the coding standards recommended for Python development, so please bear with me. I fully intend to improve it in subsequent versions of the app.

Since the code is open source, you are more than welcome to contribute to the repository.

Future direction

There are a lot of things on my roadmap. Some of the things I would like to do are:

- Improve the code.

- Use Azure Cognitive Search as the vector store.

- Make use of GitHub’s API to read the documentation files programmatically. Currently the process is manual.

- Convert the application into a web-based application.

- Update the vector database automatically and incrementally as and when the Azure documentation is updated. Currently the process is manual: you have to download the documentation files and repopulate the vector database from scratch.
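For the incremental update item, one possible approach (purely my assumption at this point, nothing like this exists in the app yet) is to keep a manifest of content hashes from the previous run and only touch the documents whose hash has changed. The names `content_hash`, `plan_update`, and the manifest layout are hypothetical.

```python
import hashlib


def content_hash(text: str) -> str:
    """SHA-256 of a document's text, used to detect changes between runs."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_update(
    current_docs: dict[str, str], manifest: dict[str, str]
) -> tuple[list[str], list[str]]:
    """Decide what to change in the vector database.

    current_docs maps file path -> current text.
    manifest maps file path -> hash recorded on the previous run.
    Returns (paths to add or re-embed, paths to delete).
    """
    to_upsert = sorted(
        path
        for path, text in current_docs.items()
        if manifest.get(path) != content_hash(text)
    )
    to_delete = sorted(path for path in manifest if path not in current_docs)
    return to_upsert, to_delete


# Example: a.md is unchanged, b.md was edited, c.md was removed, d.md is new.
manifest = {
    "a.md": content_hash("alpha"),
    "b.md": content_hash("beta"),
    "c.md": content_hash("gamma"),
}
current = {"a.md": "alpha", "b.md": "beta v2", "d.md": "delta"}
print(plan_update(current, manifest))
```

Only the paths in the first list would need to be embedded again, which avoids rebuilding the whole database on every documentation change.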

Closing

That’s it for this post. Please give this app a try and let me know your feedback. I am looking forward to hearing your thoughts.

Cheers!