In this post, I’ll walk you through a simple yet effective approach we use at Purple Leaf to ensure our application stays online, even when the Azure OpenAI service is throttled or down. By deploying Azure OpenAI in multiple regions and implementing a failover strategy, we can keep serving our users through unexpected service disruptions.

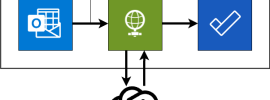

Here’s how it works:

1. Deploy Azure OpenAI Across Multiple Regions

We deploy the Azure OpenAI service in at least two regions: one as the primary and another as the secondary.

2. Failover Logic

With both regions set up, our application needs a way to switch smoothly between them. For example, if the primary region fails to process a request, the system should automatically retry the request against the secondary region. This approach ensures that, even if one service instance is down or throttled, the application can continue running without significant interruptions.

By deploying the Azure OpenAI service across multiple regions and integrating this failover strategy, we protect our application from unexpected downtime and keep our users happy.

In the next section, I’ll provide sample code to demonstrate how to implement this approach.

Code

```typescript
import OpenAI, { AzureOpenAI } from "openai";

export default class AzureOpenAIHelper {
  private readonly _azureOpenAIPrimaryClient: AzureOpenAI;
  private readonly _azureOpenAISecondaryClient: AzureOpenAI;
  // Placeholder: the original snippet used `model` without defining it.
  private readonly _model = "model-name";

  constructor() {
    // One client per region; replace the placeholder strings with real settings.
    this._azureOpenAIPrimaryClient = new AzureOpenAI({
      endpoint: "primary-end-point",
      apiKey: "primary-end-point-api-key",
      deployment: "primary-deployment-id",
      apiVersion: "primary-api-version",
    });
    this._azureOpenAISecondaryClient = new AzureOpenAI({
      endpoint: "secondary-end-point",
      apiKey: "secondary-end-point-api-key",
      deployment: "secondary-deployment-id",
      apiVersion: "secondary-api-version",
    });
  }

  // The explicit return type is needed because the method calls itself.
  async processChatRequest(
    systemMessage: string,
    userMessage: string,
    isPrimary: boolean = true,
    attempt: number = 1,
  ): Promise<OpenAI.Chat.ChatCompletion> {
    try {
      // Pick the client for the region we are trying on this attempt.
      const client = isPrimary
        ? this._azureOpenAIPrimaryClient
        : this._azureOpenAISecondaryClient;
      const messages: OpenAI.Chat.ChatCompletionMessageParam[] = [
        { role: "system", content: systemMessage },
        { role: "user", content: userMessage },
      ];
      const result = await client.chat.completions.create({
        messages,
        model: this._model,
        response_format: { type: "text" },
      });
      return result;
    } catch (error: unknown) {
      if (attempt < 4) {
        // Retry against the other region.
        return await this.processChatRequest(
          systemMessage,
          userMessage,
          !isPrimary,
          attempt + 1,
        );
      } else {
        // Fourth attempt failed: give up and surface the last error.
        throw error;
      }
    }
  }
}
```

In the class constructor, we create instances of both primary and secondary Azure OpenAI services.
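The constructor above uses placeholder strings for the endpoint, key, deployment, and API version. As a rough sketch of one way to supply those four values per region without hardcoding them, the helper below reads them from a key/value map. The `RegionConfig` type, the `loadRegionConfig` function, and the `PRIMARY_*` / `SECONDARY_*` naming scheme are all assumptions made for this illustration, not something from our production setup.

```typescript
// The four settings each regional client needs (same fields as in the constructor).
interface RegionConfig {
  endpoint: string;
  apiKey: string;
  deployment: string;
  apiVersion: string;
}

// Hypothetical helper: reads e.g. PRIMARY_ENDPOINT, PRIMARY_API_KEY, ... from `env`.
// The variable naming scheme is an assumption made for this sketch.
function loadRegionConfig(
  prefix: "PRIMARY" | "SECONDARY",
  env: Record<string, string | undefined>,
): RegionConfig {
  const read = (name: string): string => {
    const value = env[`${prefix}_${name}`];
    if (!value) {
      // Fail fast so a half-configured region is noticed at startup.
      throw new Error(`Missing setting ${prefix}_${name}`);
    }
    return value;
  };
  return {
    endpoint: read("ENDPOINT"),
    apiKey: read("API_KEY"),
    deployment: read("DEPLOYMENT"),
    apiVersion: read("API_VERSION"),
  };
}
```

In a Node.js application you could pass `process.env` as the map and hand the result straight to the client, for example `new AzureOpenAI(loadRegionConfig("PRIMARY", process.env))`.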

The processChatRequest method makes the call to the Azure OpenAI service. By default, it connects to the primary service.

If the call fails for any reason, we call the same method again, this time connecting to the secondary service. If that call also fails, we fall back to the primary.

We alternate like this for at most 4 attempts in total before we give up and rethrow the error.
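To see the order of attempts without calling a real service, here is a minimal sketch of the same alternation, written as a loop rather than recursion. The names `callWithFailover`, `fakeRequest`, and `attempted` are hypothetical and exist only for this illustration; the stub stands in for a real Azure OpenAI request.

```typescript
type Region = "primary" | "secondary";

// Runs `call` against alternating regions, starting with the primary,
// and rethrows the last error once `maxAttempts` attempts have failed.
async function callWithFailover<T>(
  call: (region: Region) => Promise<T>,
  maxAttempts: number = 4,
): Promise<T> {
  let region: Region = "primary";
  for (let attempt = 1; ; attempt++) {
    try {
      return await call(region);
    } catch (error: unknown) {
      if (attempt >= maxAttempts) {
        throw error;
      }
      // Switch to the other region for the next attempt.
      region = region === "primary" ? "secondary" : "primary";
    }
  }
}

// Stub standing in for a real request: the primary region always fails.
const attempted: Region[] = [];
async function fakeRequest(region: Region): Promise<string> {
  attempted.push(region);
  if (region === "primary") {
    throw new Error("429 Too Many Requests");
  }
  return "ok from secondary";
}
```

Calling `await callWithFailover(fakeRequest)` resolves to `"ok from secondary"`, and `attempted` ends up as `["primary", "secondary"]`. If every call fails, the function tries primary, secondary, primary, secondary, and then rethrows the fourth error.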

Summary

When working with services like Azure OpenAI, throttling and downtime can potentially bring your entire application down. That’s why having a plan for redundancy isn’t just nice to have — it’s essential.

By deploying Azure OpenAI in multiple regions and using a simple failover strategy, you’re not only adding a layer of resilience but also ensuring your app keeps running smoothly, even when things go wrong.

This setup has been super helpful for us at Purple Leaf, letting us deliver a stable, reliable experience without leaving our users hanging.

I hope you have found this blog post useful. Please do share your thoughts on how you are handling Azure OpenAI service issues.