Yesterday, I wrote a blog post about how you can copy an object (file) from Amazon S3 to Windows Azure Blob Storage using the improved “Copy Blob” functionality. You can read that post here: https://gauravmantri.com/2012/06/14/how-to-copy-an-object-from-amazon-s3-to-windows-azure-blob-storage-using-copy-blob/. At the end of that post, I mentioned that the same approach can be extended to copy all objects from an Amazon S3 bucket to Windows Azure Blob Storage, and that if nobody beat me to it, I would write a sample application for that. So here it is.


I have covered the “Copy Blob” functionality in enough detail in my previous posts, so let's jump right into the code (enough talking, I guess!). I built a small console application that copies all objects from a bucket to a blob container.


Prerequisites

As in the previous post, there are a few things you need to do before you can run the code below:

- Create a new storage account: This functionality only works with storage accounts created after 7 June 2012. If you already have a storage account created after that date, you can use it; otherwise, head over to the new and improved Windows Azure Portal and create a new storage account. Keep your account name and key handy.

- Get the latest storage client library: At the time of writing, the officially released SDK version was 1.7, which does not include this functionality. You need version 1.7.1, which is available on GitHub. Get the source code and compile it.

- Make sure the source object is accessible: As explained in the blog post above, “Copy Blob” can copy a blob from outside Windows Azure only if that blob is publicly accessible in some shape or form. Either the object has READ permission for everyone, or you use a URL similar to a Shared Access Signature (Amazon calls it a pre-signed object URL) that grants temporary read access to the object. You can use Amazon's SDK to create these URLs on the fly. Please refer to http://docs.amazonwebservices.com/AmazonS3/latest/dev/ShareObjectPreSignedURLDotNetSDK.html for instructions on how to do so using Amazon's SDK for .NET.

- Amazon SDK for .NET: Download Amazon's SDK for .NET from their website at http://aws.amazon.com/sdkfornet/.

- Have your Amazon credentials ready: You need your Amazon Access Key and Secret Key, which the application uses to list the objects in the Amazon S3 bucket.
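To show what a pre-signed object URL is actually made of, here is a minimal sketch that builds one by hand using AWS Signature Version 2 query-string authentication (an HMAC-SHA1 over the verb, expiry time and resource path), which was the scheme in use when this post was written. `PresignedUrlSketch` and `CreatePresignedGetUrl` are hypothetical names, not part of any SDK. Newer S3 regions and buckets only accept Signature Version 4, so in practice you should let Amazon's SDK generate the URL; treat this as an illustration only.

```csharp
using System;
using System.Linq;
using System.Security.Cryptography;
using System.Text;

// Hypothetical helper for illustration; assumes Signature Version 2 is accepted.
static class PresignedUrlSketch
{
    // Builds a time-limited GET URL for an S3 object (virtual-hosted style).
    public static string CreatePresignedGetUrl(string accessKey, string secretKey,
        string bucketName, string objectKey, DateTimeOffset expiresAt)
    {
        // Percent-encode each path segment of the key but keep the "/" separators.
        string encodedKey = string.Join("/",
            objectKey.Split('/').Select(segment => Uri.EscapeDataString(segment)));
        long expires = expiresAt.ToUnixTimeSeconds();

        // StringToSign = Verb \n Content-MD5 \n Content-Type \n Expires \n /bucket/key
        string stringToSign = "GET\n\n\n" + expires + "\n/" + bucketName + "/" + encodedKey;

        using (var hmac = new HMACSHA1(Encoding.UTF8.GetBytes(secretKey)))
        {
            // Signature = Base64(HMAC-SHA1(secret key, StringToSign)), then URL-encoded.
            string signature = Convert.ToBase64String(
                hmac.ComputeHash(Encoding.UTF8.GetBytes(stringToSign)));
            return "https://" + bucketName + ".s3.amazonaws.com/" + encodedKey
                + "?AWSAccessKeyId=" + Uri.EscapeDataString(accessKey)
                + "&Expires=" + expires
                + "&Signature=" + Uri.EscapeDataString(signature);
        }
    }
}
```

A URL produced this way could be passed to `StartCopyFromBlob` in place of the plain object URL, as long as it does not expire before the copy completes.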

The Code

The code is really simple and is shown below. Please note that it is for demonstration purposes only; feel free to modify it to meet your requirements.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;
using System.Linq;
using Amazon.S3;
using Amazon.S3.Model;
using Microsoft.WindowsAzure;
using Microsoft.WindowsAzure.StorageClient;

namespace CopyAmazonBucketToBlobStorage
{
    class Program
    {
        //Windows Azure Storage Account Name
        private static string azureStorageAccountName = "<Your Windows Azure Storage Account Name>";
        //Windows Azure Storage Account Key
        private static string azureStorageAccountKey = "<Your Windows Azure Storage Account Key>";
        //Windows Azure Blob Container Name (Target)
        private static string azureBlobContainerName = "<Windows Azure Blob Container Name>";
        //Amazon Access Key
        private static string amazonAccessKey = "<Your Amazon Access Key>";
        //Amazon Secret Key
        private static string amazonSecretKey = "<Your Amazon Secret Key>";
        //Amazon Bucket Name (Source)
        private static string amazonBucketName = "<Amazon Bucket Name>";
        //URL of a publicly accessible object: {0} = bucket name, {1} = URL-encoded object key
        private static string objectUrlFormat = "https://{0}.s3.amazonaws.com/{1}";

        //This dictionary keeps track of progress. The key is the URL of the target blob.
        private static Dictionary<string, CopyBlobProgress> copyProgress;

        static void Main(string[] args)
        {
            //Create a reference to the Windows Azure Storage Account
            CloudStorageAccount azureCloudStorageAccount = new CloudStorageAccount(new StorageCredentialsAccountAndKey(azureStorageAccountName, azureStorageAccountKey), true);
            //Get a reference to the blob container where files (objects) will be copied
            var blobContainer = azureCloudStorageAccount.CreateCloudBlobClient().GetContainerReference(azureBlobContainerName);
            //Create the blob container if needed
            Console.WriteLine("Trying to create the blob container....");
            blobContainer.CreateIfNotExist();
            Console.WriteLine("Blob container is ready....");
            //Create a reference to the Amazon S3 client
            AmazonS3Client amazonClient = new AmazonS3Client(amazonAccessKey, amazonSecretKey);
            copyProgress = new Dictionary<string, CopyBlobProgress>();
            string continuationToken = null;
            bool continueListObjects = true;
            //ListObjects returns at most 1000 objects per call (we ask for 100 at a time),
            //so we keep calling it until all objects have been listed.
            while (continueListObjects)
            {
                ListObjectsRequest requestToListObjects = new ListObjectsRequest()
                {
                    BucketName = amazonBucketName,
                    Marker = continuationToken,
                    MaxKeys = 100,
                };
                Console.WriteLine("Trying to list objects from S3 Bucket....");
                var listObjectsResponse = amazonClient.ListObjects(requestToListObjects);
                var objectsFetchedSoFar = listObjectsResponse.S3Objects;
                Console.WriteLine("Object listing complete. Now starting the copy process....");
                //Work out the marker for the next page before processing this one.
                //S3 only returns NextMarker when a delimiter is specified, so for a
                //truncated response without it we use the key of the last object.
                if (listObjectsResponse.IsTruncated && objectsFetchedSoFar.Count > 0)
                {
                    continuationToken = string.IsNullOrEmpty(listObjectsResponse.NextMarker)
                        ? objectsFetchedSoFar[objectsFetchedSoFar.Count - 1].Key
                        : listObjectsResponse.NextMarker;
                }
                else
                {
                    continuationToken = null;
                }
                foreach (var s3Object in objectsFetchedSoFar)
                {
                    string objectKey = s3Object.Key;
                    //ListObjects returns both files and "folders"; for now we skip folders,
                    //assuming any key that ends with "/" is a folder.
                    if (objectKey.EndsWith("/"))
                    {
                        continue;
                    }
                    //Construct the URL to copy, percent-encoding each segment of the key
                    string encodedKey = string.Join("/", objectKey.Split('/').Select(segment => Uri.EscapeDataString(segment)));
                    string urlToCopy = string.Format(CultureInfo.InvariantCulture, objectUrlFormat, amazonBucketName, encodedKey);
                    //Create an instance of CloudBlockBlob for the target
                    CloudBlockBlob blockBlob = blobContainer.GetBlockBlobReference(objectKey);
                    var blockBlobUrl = blockBlob.Uri.AbsoluteUri;
                    if (copyProgress.ContainsKey(blockBlobUrl))
                    {
                        continue;
                    }
                    copyProgress.Add(blockBlobUrl, new CopyBlobProgress() { Status = CopyStatus.NotStarted });
                    //Start the copy. This is wrapped in try/catch because copying from
                    //Amazon S3 only works for publicly accessible objects (or pre-signed URLs).
                    try
                    {
                        Console.WriteLine("Trying to copy \"{0}\" to \"{1}\"", urlToCopy, blockBlobUrl);
                        blockBlob.StartCopyFromBlob(new Uri(urlToCopy));
                        copyProgress[blockBlobUrl].Status = CopyStatus.Started;
                    }
                    catch (Exception exception)
                    {
                        copyProgress[blockBlobUrl].Status = CopyStatus.Failed;
                        copyProgress[blockBlobUrl].Error = exception;
                    }
                }
                Console.WriteLine("");
                Console.WriteLine("==========================================================");
                Console.WriteLine("");
                Console.WriteLine("Checking the status of copy process....");
                //Now we track the progress
                bool checkForBlobCopyStatus = true;
                while (checkForBlobCopyStatus)
                {
                    //List blobs in the blob container, including their copy state
                    var blobsList = blobContainer.ListBlobs(true, BlobListingDetails.Copy);
                    foreach (var item in blobsList)
                    {
                        var blob = item as CloudBlob;
                        if (blob == null || !copyProgress.ContainsKey(blob.Uri.AbsoluteUri))
                        {
                            continue;
                        }
                        var progress = copyProgress[blob.Uri.AbsoluteUri];
                        var copyState = blob.CopyState;
                        if (copyState == null)
                        {
                            continue;
                        }
                        Console.WriteLine("Status of \"{0}\" blob copy: {1}", blob.Uri.AbsoluteUri, copyState.Status);
                        //Map the storage client's copy status onto our own tracking enum
                        switch (copyState.Status)
                        {
                            case Microsoft.WindowsAzure.StorageClient.CopyStatus.Aborted:
                                progress.Status = CopyStatus.Aborted;
                                break;
                            case Microsoft.WindowsAzure.StorageClient.CopyStatus.Failed:
                                progress.Status = CopyStatus.Failed;
                                break;
                            case Microsoft.WindowsAzure.StorageClient.CopyStatus.Invalid:
                                progress.Status = CopyStatus.Invalid;
                                break;
                            case Microsoft.WindowsAzure.StorageClient.CopyStatus.Pending:
                                progress.Status = CopyStatus.Pending;
                                break;
                            case Microsoft.WindowsAzure.StorageClient.CopyStatus.Success:
                                progress.Status = CopyStatus.Success;
                                break;
                        }
                    }
                    //Keep polling (once a second) while any copy is still pending
                    if (copyProgress.Any(c => c.Value.Status == CopyStatus.Pending))
                    {
                        System.Threading.Thread.Sleep(1000);
                    }
                    else
                    {
                        checkForBlobCopyStatus = false;
                    }
                }
                //Stop once there are no more pages of objects to list
                if (string.IsNullOrWhiteSpace(continuationToken))
                {
                    continueListObjects = false;
                }
                Console.WriteLine("");
                Console.WriteLine("==========================================================");
                Console.WriteLine("");
            }
            Console.WriteLine("Process completed....");
            Console.WriteLine("Press Enter to terminate the program....");
            Console.ReadLine();
        }
    }

    public class CopyBlobProgress
    {
        public CopyStatus Status { get; set; }
        public Exception Error { get; set; }
    }

    public enum CopyStatus
    {
        NotStarted,
        Started,
        Aborted,
        Failed,
        Invalid,
        Pending,
        Success
    }
}
```
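One thing to keep in mind about the status-checking loop: it keeps sleeping for as long as any copy reports Pending, with no upper bound, so a stalled copy would keep the program waiting forever. If you want a bounded wait, one option is a small helper like the hypothetical `CopyPolling.WaitForCopies` below. The `countPendingCopies` delegate is an assumption you would implement yourself, for example by running the `ListBlobs` pass and counting the entries still marked Pending.

```csharp
using System;
using System.Diagnostics;
using System.Threading;

// Hypothetical helper: not part of the Windows Azure storage client library.
static class CopyPolling
{
    // Polls countPendingCopies() every pollInterval until it returns 0 (returns true)
    // or until timeout has elapsed with copies still pending (returns false).
    public static bool WaitForCopies(Func<int> countPendingCopies, TimeSpan pollInterval, TimeSpan timeout)
    {
        var stopwatch = Stopwatch.StartNew();
        while (true)
        {
            int pending = countPendingCopies();
            if (pending == 0)
            {
                return true;
            }
            if (stopwatch.Elapsed >= timeout)
            {
                Console.WriteLine("Giving up with {0} copies still pending.", pending);
                return false;
            }
            Thread.Sleep(pollInterval);
        }
    }
}
```

When it returns false, the still-pending copies keep running on the storage service; the helper only decides how long the console application waits for them.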

The console application is pretty straightforward. Here is what it does:

1. Creates a blob container in Windows Azure Storage (if needed).

2. Lists the objects in the Amazon S3 bucket. Note that Amazon S3 returns at most 1000 objects per call. If more objects are available, the response is marked as truncated, and you pass a marker to get the next set of objects: the “Next Marker” from the response when it is present (S3 only returns it when a delimiter is specified), otherwise the key of the last object returned. I talked about this in a blog post comparing Windows Azure Blob Storage and Amazon S3 some days back. You can read it here: https://gauravmantri.com/2012/05/09/comparing-windows-azure-blob-storage-and-amazon-simple-storage-service-s3part-i/

3. For each object returned, constructs the URL of the object and invokes the “Copy Blob” functionality with that URL. For the sake of simplicity, the code assumes that the object is publicly accessible. If it is not, you need to create a pre-signed URL that grants read access to the object.

4. Checks the status of the copy operations. Once all pending copy operations have completed, steps 2 to 4 are repeated until all objects have been fetched from the Amazon S3 bucket and copied over.
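The listing and URL-building steps boil down to a marker-driven loop. The sketch below isolates that logic from both SDKs so it can be run on its own: `ListingPage`, `BucketWalker` and the `listPage` delegate are hypothetical stand-ins for an S3 `ListObjects` response and call, not Amazon SDK types. It skips keys ending in "/", percent-encodes each key segment when building the source URL, and falls back to the last key when a truncated page carries no next marker.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;
using System.Linq;

// Hypothetical stand-in for one page of a ListObjects response (not an SDK type).
class ListingPage
{
    public List<string> Keys { get; set; } = new List<string>();
    public bool IsTruncated { get; set; }
    public string NextMarker { get; set; } // S3 only fills this in when a delimiter is used
}

static class BucketWalker
{
    // Returns the "Copy Blob" source URL for every non-folder key in the bucket.
    // listPage takes the current marker (null for the first page) and returns one page.
    public static List<string> CollectSourceUrls(string bucketName, Func<string, ListingPage> listPage)
    {
        var urls = new List<string>();
        string marker = null;
        while (true)
        {
            ListingPage page = listPage(marker);
            foreach (string key in page.Keys)
            {
                if (key.EndsWith("/"))
                {
                    continue; // treat keys ending in "/" as folders and skip them
                }
                string encodedKey = string.Join("/",
                    key.Split('/').Select(segment => Uri.EscapeDataString(segment)));
                urls.Add(string.Format(CultureInfo.InvariantCulture,
                    "https://{0}.s3.amazonaws.com/{1}", bucketName, encodedKey));
            }
            if (!page.IsTruncated || page.Keys.Count == 0)
            {
                break; // last page reached
            }
            // Prefer NextMarker; without a delimiter S3 omits it, so use the last key.
            marker = string.IsNullOrEmpty(page.NextMarker)
                ? page.Keys[page.Keys.Count - 1]
                : page.NextMarker;
        }
        return urls;
    }
}
```

In the real program, `listPage` would wrap `AmazonS3Client.ListObjects` with `Marker` set to the value passed in, and each returned URL would be handed to `StartCopyFromBlob`.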

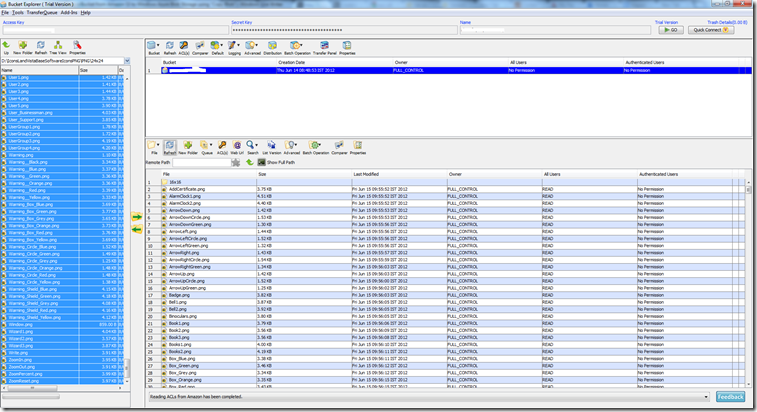

I used Bucket Explorer to manage the contents of Amazon S3. Here's what the content looks like there:

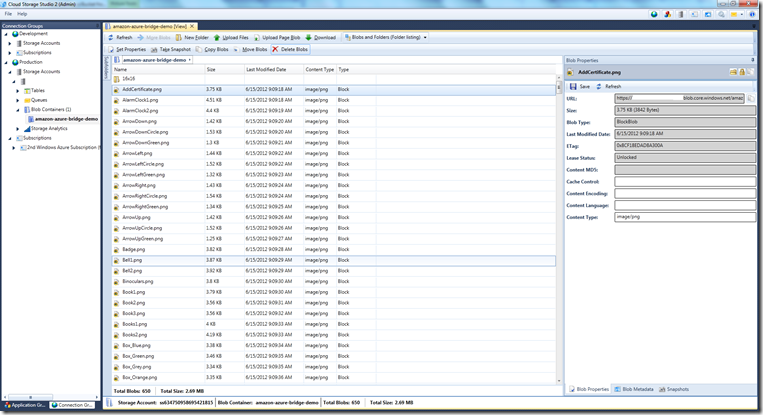

After copying, I can see these blobs in Windows Azure Blob Storage using Cloud Storage Studio.

Summary

As you saw, Windows Azure has made it really easy to bring your data into Windows Azure. If you're considering moving from another cloud platform to Windows Azure and are somewhat concerned about onboarding friction, this is one step towards reducing that friction.

In this post, I created a simple example that copies the contents of an Amazon S3 bucket to a blob container in Windows Azure Blob Storage.

I hope you have found this information useful. If you find any errors or incorrect information, please let me know by leaving a comment, and I'll fix those issues ASAP.

Stay tuned for more Windows Azure related posts. So Long!!!